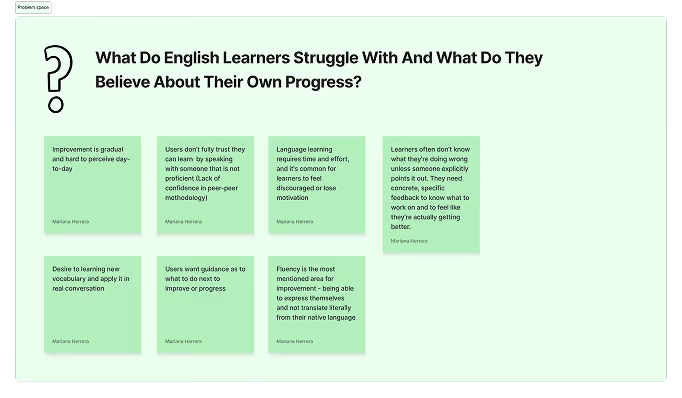

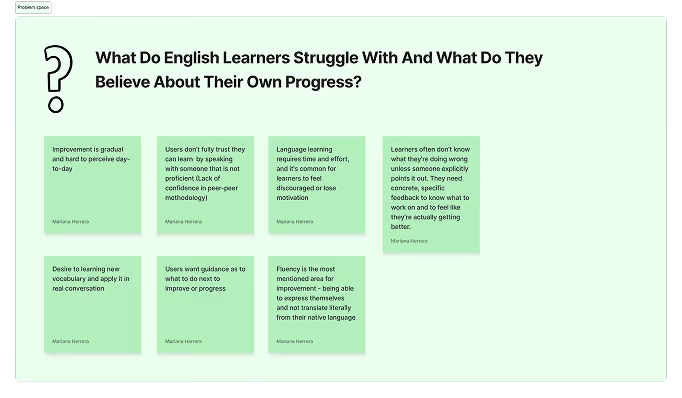

A recurring concern among users was a lack of trust in the peer-to-peer methodology. Learners frequently asked whether a teacher-led option was available and worried about how they could truly improve without corrections from a proficient English speaker. This was a significant barrier to adoption. Leap is built on the premise that practice is the most effective way to learn a language, so the product initially focused exclusively on live conversation sessions. Still, this concern couldn't be ignored.

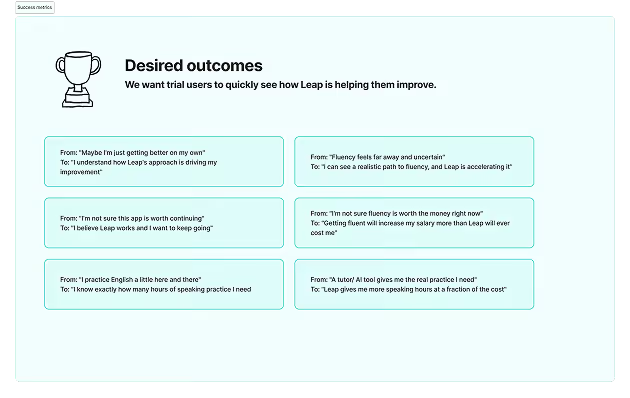

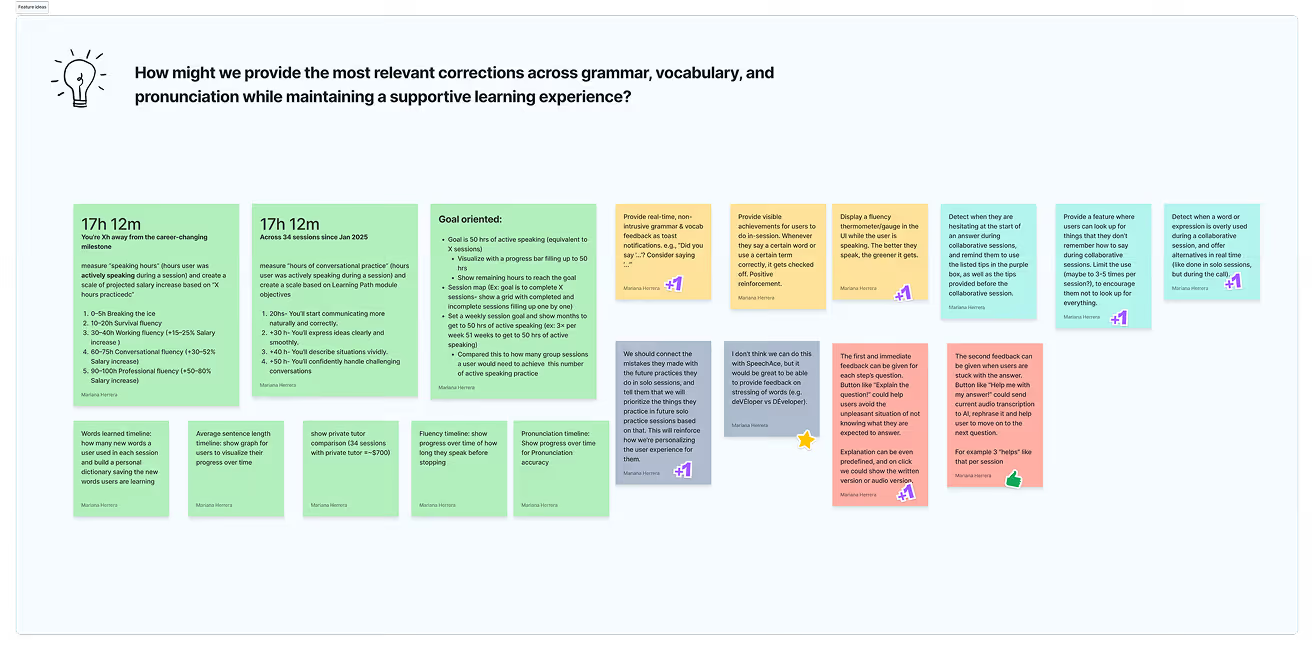

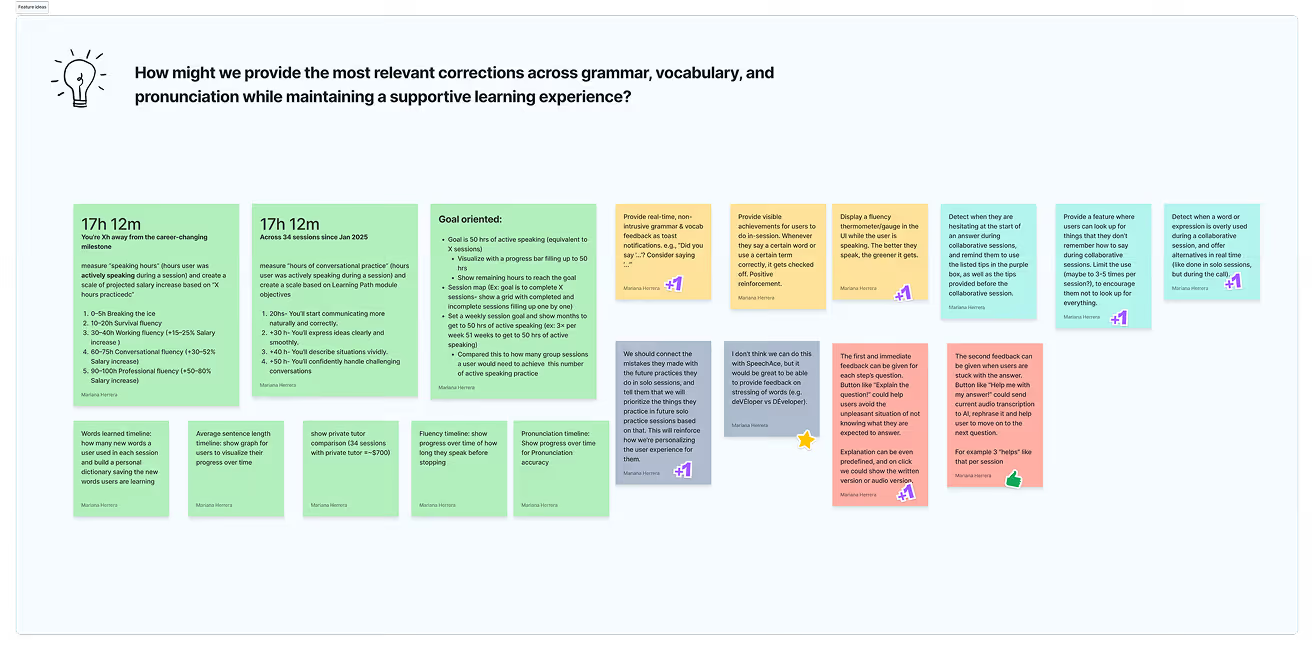

The central design challenge became: how do we give users feedback that feels personalized and leaves them confident that they learned something and are genuinely improving, even when practicing with a fellow learner?

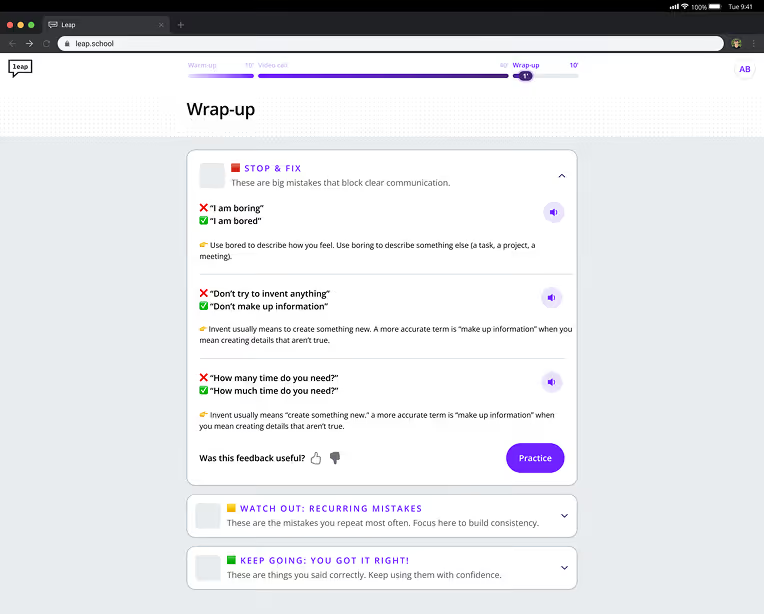

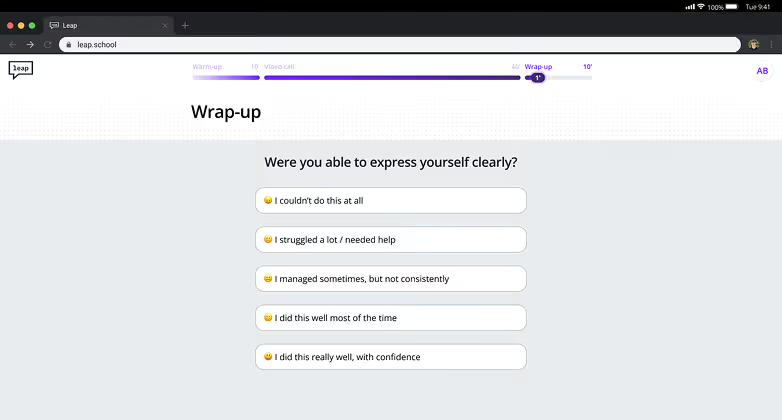

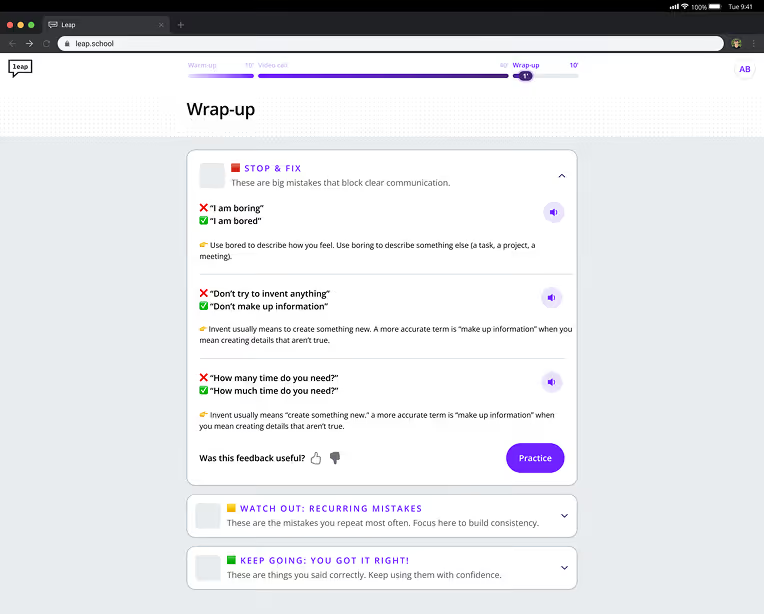

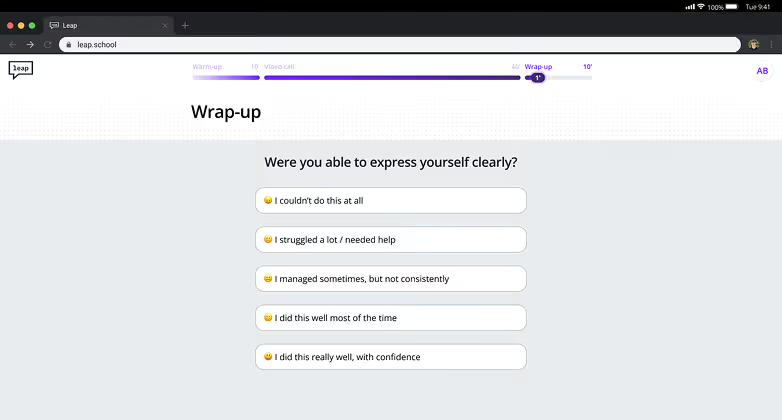

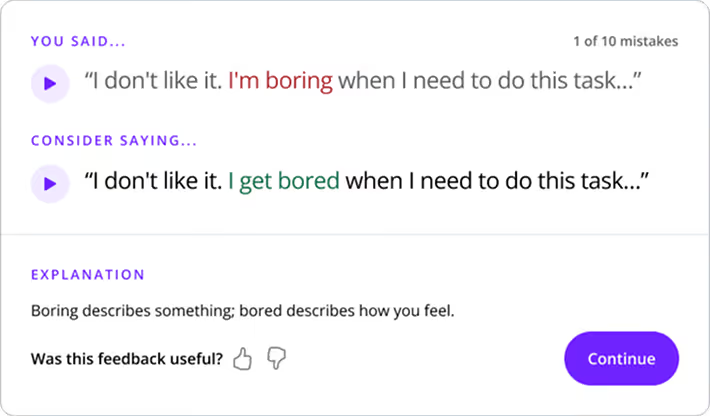

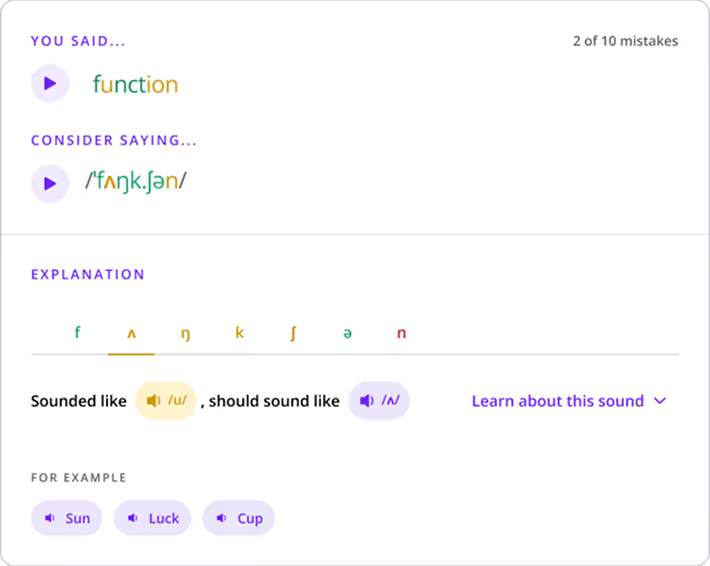

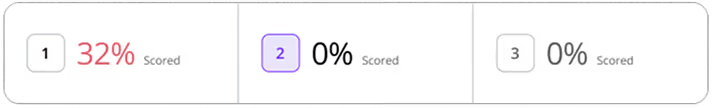

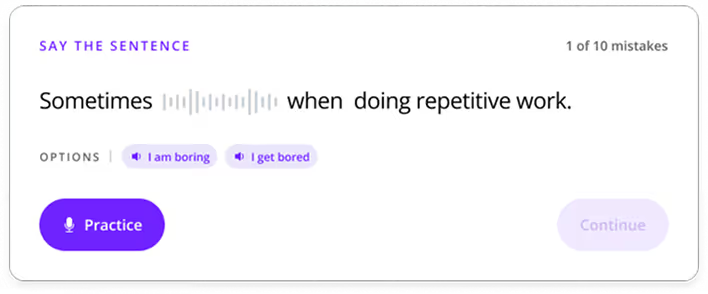

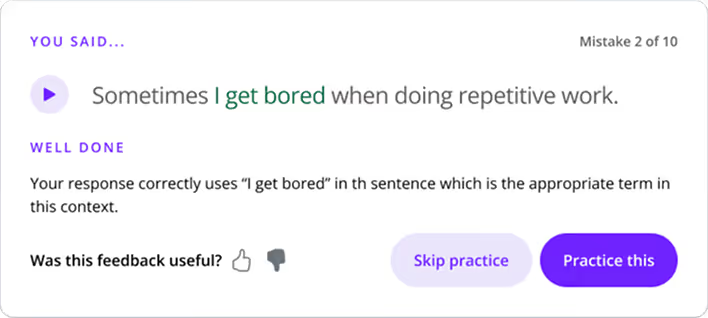

To address this, we designed AI-powered session feedback. Sessions are recorded and analyzed to identify the actual mistakes users made during the conversation. At the end of each session, the system surfaces the 10 most critical mistakes with clear, actionable corrections. Getting there required extensive testing with users and careful refinement of the AI prompts, so that the feedback felt relevant, actionable, and digestible while keeping an encouraging tone.

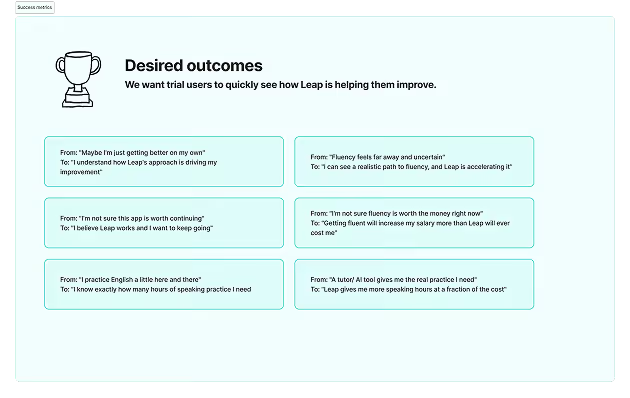

The result exceeded expectations. This feature became the second most loved part of the product, after the video call itself, and is consistently cited by users as the most helpful moment in the experience. Users who previously skipped the post-session practice are now completing it at record rates. Engagement has also grown significantly: users are spending more time on the platform than ever before. More importantly, it meaningfully shifted user trust, both in Leap as a product and in the peer-to-peer learning method as a whole.
